How Mamba 3 Reduces AI Costs on Remote Desktop Servers

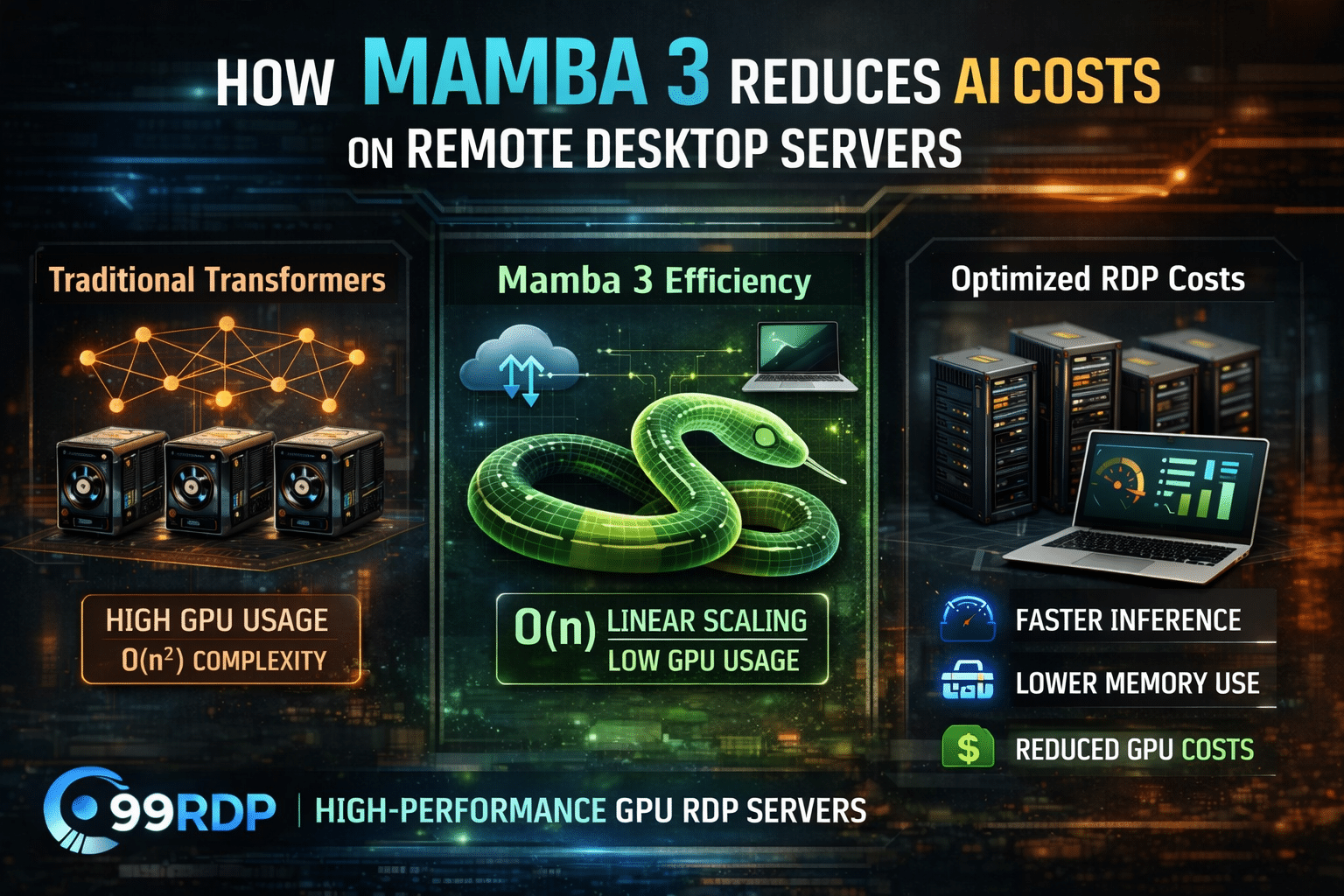

The AI ecosystem is undergoing a significant architectural shift. For years, Transformer-based models dominated the landscape, powering everything from chatbots to large-scale automation systems. However, as workloads scale and infrastructure costs skyrocket, developers are actively seeking more efficient alternatives.

Enter Mamba 3 — a next-generation sequence modeling architecture that is not only competitive with Transformers in performance but significantly more efficient in compute, memory, and cost.

For users leveraging Remote Desktop Protocol (RDP) environments, especially GPU-powered systems, this evolution is more than just a technical upgrade—it’s a cost optimization breakthrough.

In this detailed guide, we explore how Mamba 3 reduces AI costs on remote desktop servers, and how you can strategically deploy it using platforms like 99RDP to maximize performance and ROI.

Why AI Infrastructure is Expensive

Modern AI systems—particularly those based on Transformers—are inherently resource-intensive.

Transformer Bottlenecks

Transformers rely on the self-attention mechanism, which introduces:

- Quadratic time complexity — O(n²)

- Memory usage proportional to input sequence length

- Heavy dependence on high-end GPUs

Infrastructure Impact

For developers running AI workloads on RDP:

- GPU instances become expensive (especially A100/H100 tiers)

- Long-context processing leads to memory overflow or throttling

- Scaling requires multi-GPU setups

Even inference workloads can become costly when deployed at scale.

This is where Mamba 3 changes the equation.

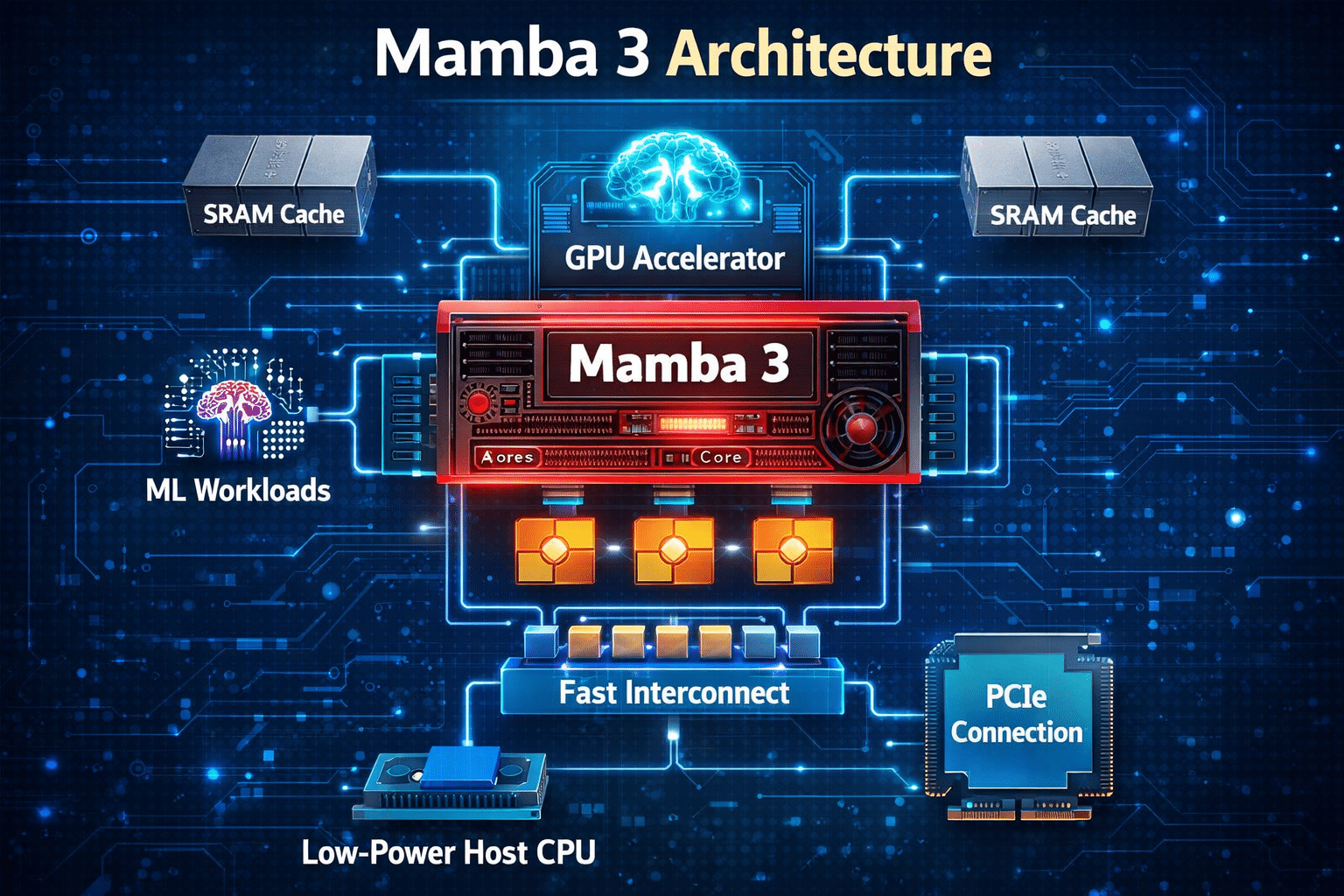

Mamba 3 Architecture: A Technical Overview

Mamba 3 is built on Selective State Space Models (SSMs), offering a fundamentally different approach to sequence modeling.

Instead of processing entire sequences through attention matrices, Mamba:

- Maintains a compact state representation

- Processes inputs sequentially but efficiently

- Eliminates the need for full attention computation

Key Architectural Advantages

1. Linear Time Complexity — O(n)

Unlike Transformers:

- Mamba scales linearly with sequence length

- Performance remains stable even with large inputs

Technical Impact on RDP:

- Reduced GPU cycles per request

- Predictable scaling behavior

2. Constant Memory Footprint

Transformers:

- Store attention matrices → high VRAM usage

Mamba:

- Uses a fixed-size hidden state

Result:

- Minimal VRAM requirements

- Ideal for mid-tier GPU environments

3. Hardware Efficiency

Mamba is optimized for:

- Parallel computation where needed

- Sequential efficiency where beneficial

This hybrid design ensures:

- Better GPU utilization

- Reduced idle cycles

4. High Throughput Inference

Benchmarks indicate:

- Up to 5× higher throughput compared to traditional Transformer models

For RDP users:

- More requests processed per second

- Lower execution time

Cost Optimization on Remote Desktop Servers

Now let’s connect architecture to real-world infrastructure.

1. Reduced GPU Dependency

Transformer workloads often require:

- High VRAM GPUs (≥ 40GB)

- Multi-GPU clusters

With Mamba 3:

- Models can run efficiently on lower-tier GPUs

- Single-GPU setups become viable

RDP Advantage:

- Choose affordable GPU RDP plans

- Avoid over-provisioning

2. Lower Memory Allocation Costs

Memory is a major cost driver in cloud and RDP environments.

Mamba:

- Eliminates attention matrix overhead

- Keeps memory usage stable

Result:

- Reduced RAM/VRAM allocation

- Ability to run multiple workloads simultaneously

3. Faster Execution = Lower Billing Time

Most RDP providers use time-based billing.

With Mamba:

- Faster inference cycles

- Reduced job completion time

Example:

| Task | Transformer | Mamba 3 |

|---|---|---|

| Inference Time | 10 hours | 4–5 hours |

Cost Saving: Up to 50–60%

4. Efficient Long-Context Processing

Long-sequence tasks are extremely expensive with Transformers.

Mamba handles:

- Large documents

- Continuous streams

- Long prompts

RDP Impact:

- No need to split workloads

- Fewer compute cycles

- Reduced overhead

5. Higher Throughput per Instance

Throughput directly impacts ROI.

With Mamba:

- One RDP instance can serve multiple users/tasks

- Higher concurrency without scaling infrastructure

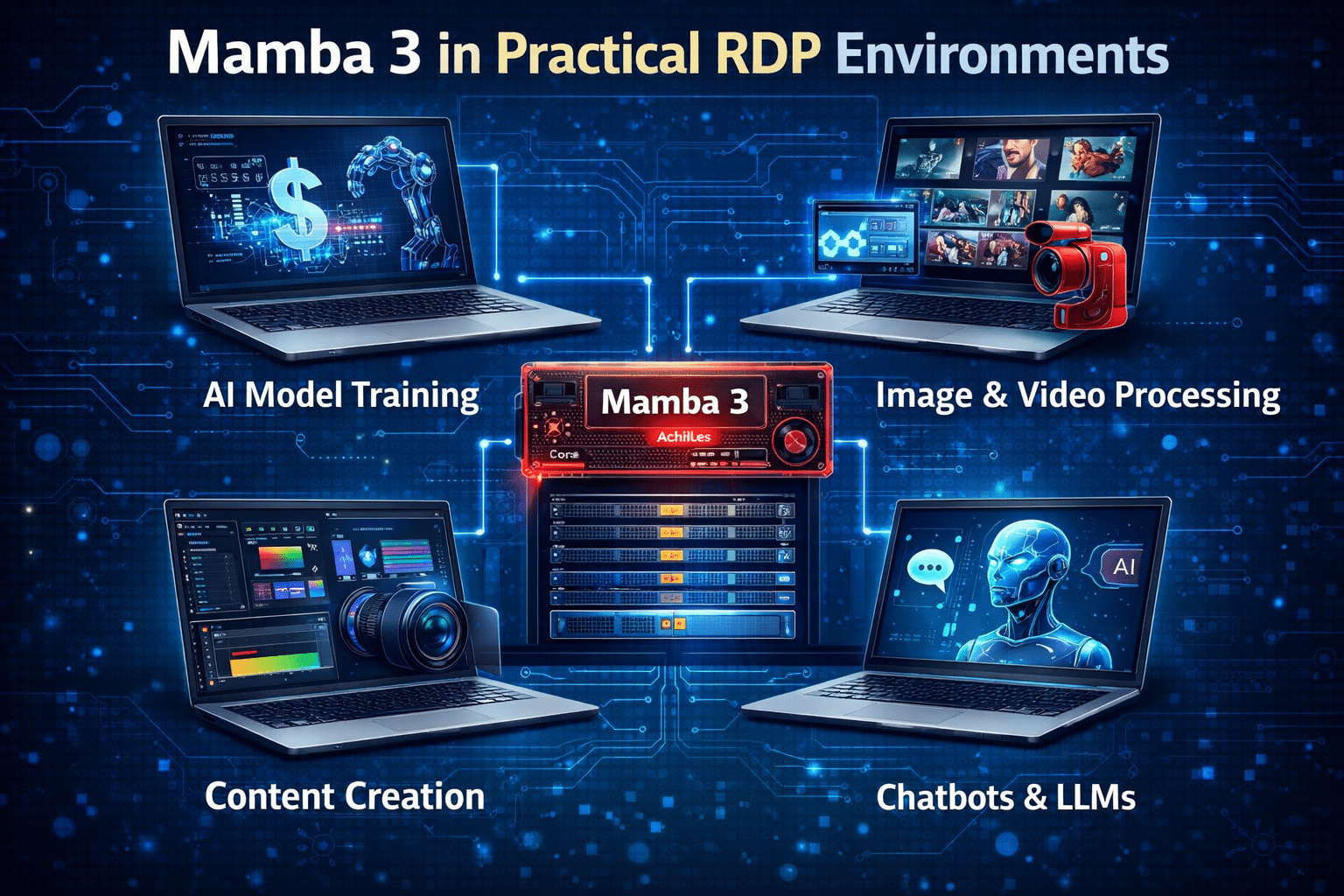

Real-World Deployment Scenarios on RDP

Let’s look at how developers can leverage Mamba 3 in practical RDP environments.

AI SaaS Platforms

- Chatbots

- AI writing tools

- Customer support systems

Benefit:

- Serve more users with fewer GPU resources

Algorithmic Trading Systems

- Real-time decision-making

- Continuous data ingestion

Benefit:

- Lower latency

- Faster execution cycles

Content Automation Pipelines

- SEO article generation

- Bulk content workflows

Benefit:

- Reduced cost per output

- Faster turnaround

Research & Document Processing

- Legal analysis

- Academic research

- Government exam prep tools

Benefit:

- Handle large documents efficiently

Autonomous AI Agents

- Multi-step reasoning systems

- Workflow automation

Benefit:

- Continuous operation with minimal compute load

Why 99RDP is the Ideal Platform for Mamba Workloads

Efficient models require equally efficient infrastructure.

99RDP provides an optimized environment for deploying Mamba-based AI systems.

Key Infrastructure Advantages

GPU-Enabled Remote Desktops

- Run AI workloads without investing in physical hardware

Cost-Effective Pricing

- Combine Mamba’s efficiency with affordable RDP plans

Scalable Resources

- Upgrade CPU, RAM, and GPU as needed

High Availability

- 24/7 uptime for long-running AI jobs

Developer-Friendly Environment

- Full control over configurations

- Ideal for experimentation and deployment

Strategic Insight: Mamba + RDP = Infrastructure Efficiency

The real transformation lies in combining:

Efficient AI Architecture (Mamba 3)

Flexible Cloud Access (99RDP)

Comparative Analysis

| Parameter | Transformer on RDP | Mamba 3 on RDP |

|---|---|---|

| Compute Complexity | O(n²) | O(n) |

| GPU Requirement | High | Moderate |

| Memory Usage | High | Low |

| Throughput | Moderate | High |

| Cost Efficiency | Low | High |

Future Outlook: AI Infrastructure is Evolving

The shift toward architectures like Mamba signals a broader trend:

From:

- Compute-heavy models

- Hardware scaling

- Expensive clusters

To:

- Efficient algorithms

- Smart resource utilization

- Cost-optimized deployments

For RDP users, this means:

- You can build powerful AI systems without massive capital

- You can scale intelligently instead of aggressively

- You can compete with larger players using optimized infrastructure

Conclusion

Mamba 3 represents a paradigm shift in AI deployment economics.

By reducing:

- Computational complexity

- Memory requirements

- GPU dependency

…it enables developers to run advanced AI workloads at significantly lower costs.

When deployed on high-performance platforms like 99RDP, the benefits multiply:

✔ Faster inference

✔ Lower billing cycles

✔ Higher throughput

✔ Scalable infrastructure

Final Takeaway

If you are running AI workloads on remote desktop servers:

Mamba 3 is not just an upgrade—it’s a strategic advantage

And when paired with a reliable RDP provider like 99RDP, it becomes a complete cost-optimization solution

EXPLORE MORE; How to Secure Linux with Fail2ban

READ OUR BLOGS