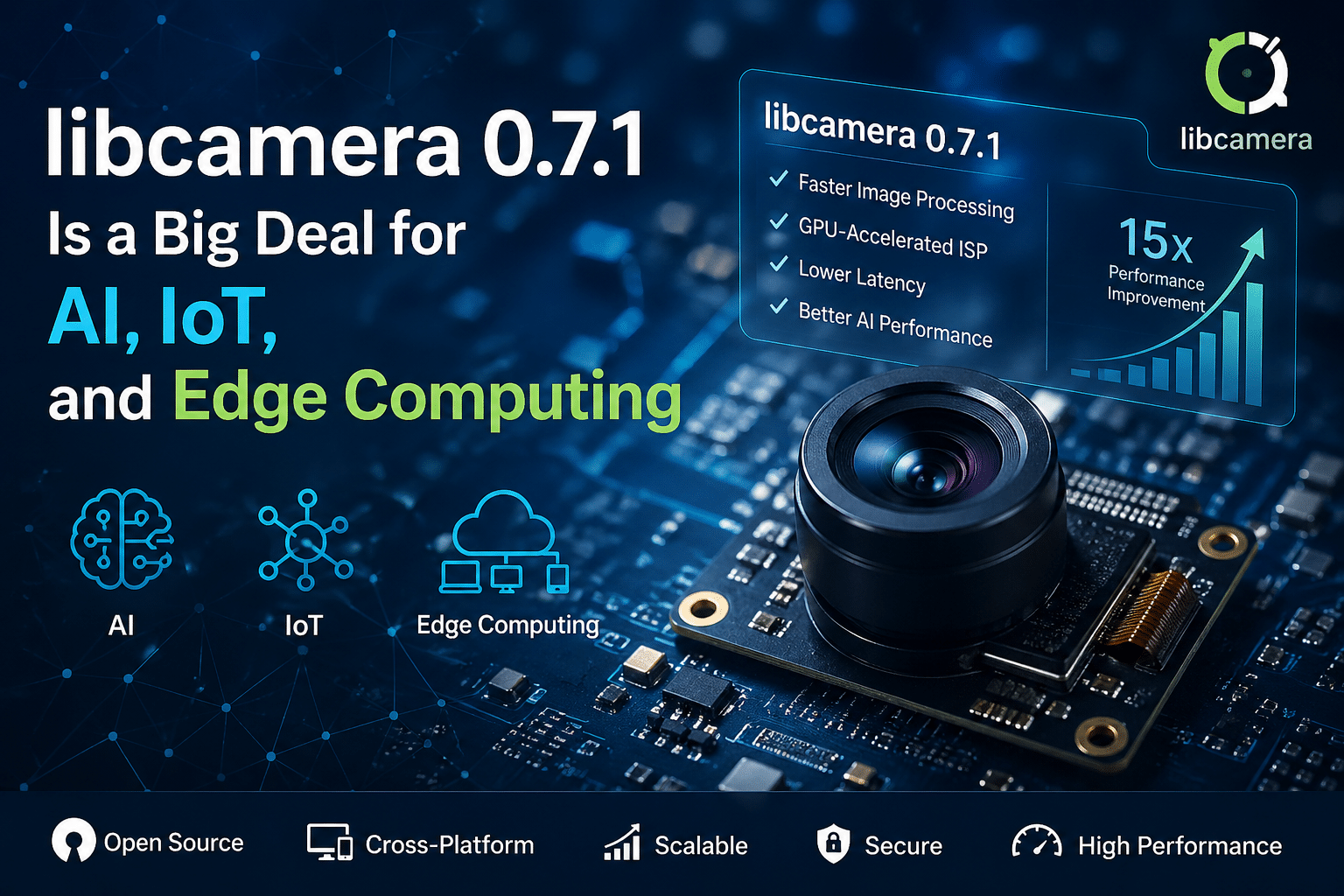

Why libcamera 0.7.1 Is a Big Deal for AI, IoT, and Edge Computing

The release of libcamera 0.7.1 might look incremental at first glance, but it reflects a deeper shift in how camera pipelines operate on Linux-based systems. As AI, IoT, and edge computing workloads continue to scale, camera subsystems are no longer just peripherals: they are core data sources for real-time intelligence.

This update strengthens performance, enhances software-based image processing, and pushes the ecosystem closer to hardware-agnostic, GPU-accelerated vision pipelines. When paired with scalable infrastructure like 99RDP, it unlocks a new class of distributed, high-performance computer vision applications.

Let’s break this down in detail.

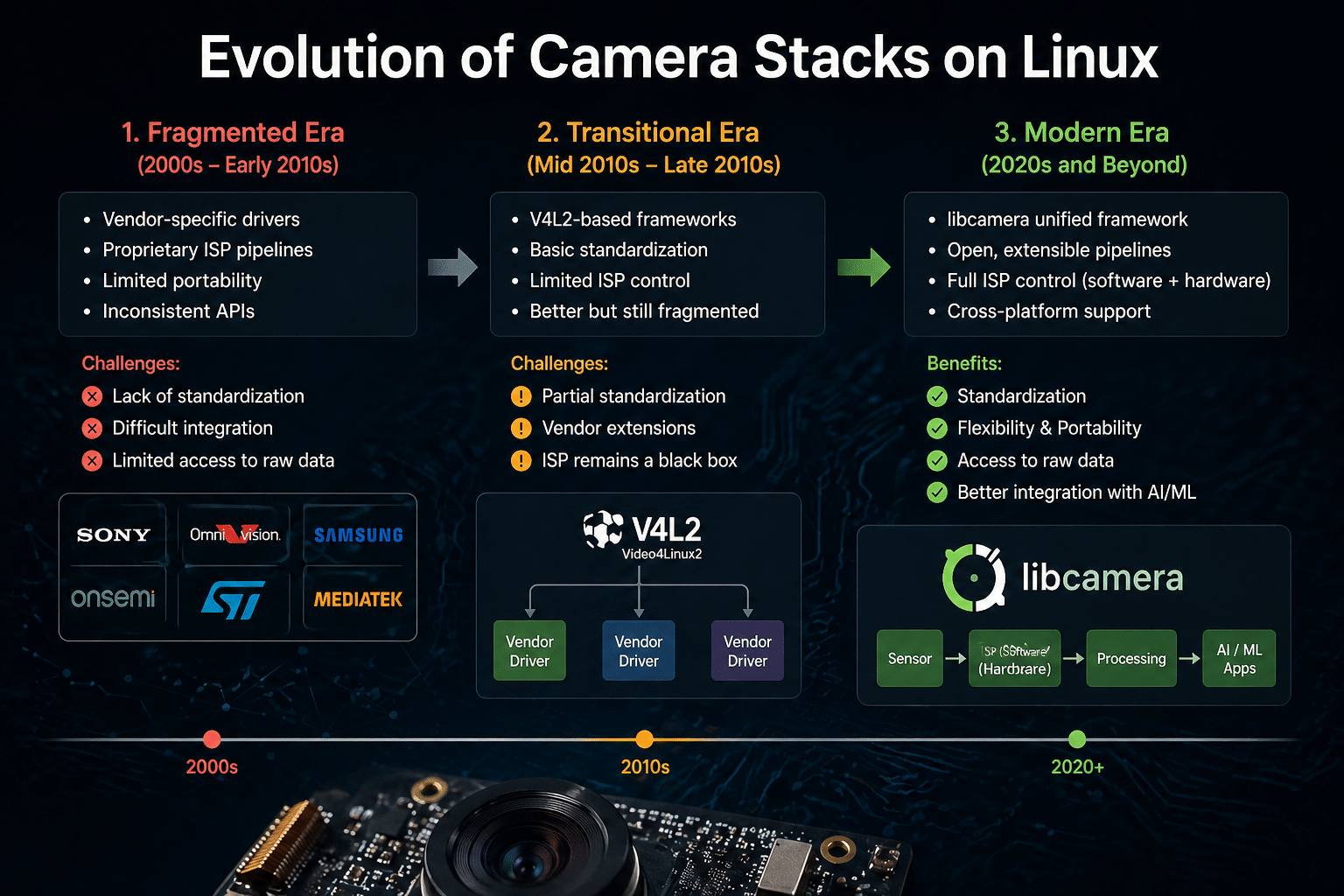

The Evolution of Camera Stacks on Linux

Traditionally, Linux camera support has been fragmented:

- Vendor-specific drivers

- Proprietary ISP (Image Signal Processing) pipelines

- Limited flexibility for developers

This created three major problems:

- Lack of portability across devices

- Limited access to raw image data

- Difficult integration with AI/ML frameworks

libcamera was introduced to solve these issues by offering:

- A unified camera framework

- Hardware abstraction for different sensors

- A flexible pipeline for modern imaging workflows

With version 0.7.1, this vision is becoming more practical and production-ready.

What’s New in libcamera 0.7.1

The 0.7.x series builds heavily on the Software ISP (SoftISP), which performs image processing in software instead of on a dedicated hardware ISP. This matters most on platforms whose hardware ISP is missing or not supported by libcamera.

Key Enhancements:

1. Improved Software ISP Pipeline

- More stable processing pipeline

- Better handling of image transformations

- Enhanced efficiency in resource utilization

2. Optimized Performance Pathways

- Reduced latency in frame processing

- Better scheduling across CPU and GPU

- Improved throughput for multi-camera setups

3. Increased Developer Control

- Greater flexibility in tuning pipelines

- Easier integration with custom AI workflows

These improvements are not isolated: they directly affect how quickly frames reach AI inference, how far systems can scale, and whether real-time processing is achievable.

The Data Behind the Impact

Earlier benchmarks from the 0.7 release series demonstrated:

- Up to 15× performance improvement in certain ISP workloads

- Significant reduction in CPU bottlenecks

- Better utilization of GPU resources

What This Means Practically:

| Metric | Before 0.7.x | After (0.7.x) |
|---|---|---|
| Frame processing latency | High | Significantly reduced |
| CPU usage | Heavy | Optimized |
| Multi-stream handling | Limited | Scalable |
| Real-time capability | Constrained | Enabled |

For workloads bottlenecked by image processing, this is a step change rather than a marginal upgrade.
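
To see why a faster ISP stage matters for real-time capability, consider a frame budget. The sketch below assumes a simple sequential capture, ISP, inference loop, where per-frame latency must fit within one frame period (1000 / fps milliseconds). The stage timings are hypothetical, chosen only for illustration, and the "up to 15×" figure is applied to the ISP stage alone.

```python
# Frame-budget check for a simple sequential capture -> ISP -> inference loop.
# All stage timings are hypothetical and for illustration only.


def frame_budget_ms(fps: float) -> float:
    """Time available to handle one frame at the given frame rate."""
    return 1000.0 / fps


def total_latency_ms(stages: dict[str, float]) -> float:
    """Per-frame latency when the stages run one after another."""
    return sum(stages.values())


def fits_budget(stages: dict[str, float], fps: float) -> bool:
    """True if the sequential loop can keep up with the camera."""
    return total_latency_ms(stages) <= frame_budget_ms(fps)


# Hypothetical timings in milliseconds (not libcamera measurements).
before = {"capture": 5.0, "isp": 45.0, "inference": 20.0}
# Assume only the ISP stage receives the "up to 15x" speedup.
after = dict(before, isp=before["isp"] / 15)

if __name__ == "__main__":
    fps = 30
    print(f"budget at {fps} fps: {frame_budget_ms(fps):.1f} ms")
    for name, stages in (("before", before), ("after", after)):
        print(name, total_latency_ms(stages), "ms, fits:", fits_budget(stages, fps))
    print("end-to-end speedup:", total_latency_ms(before) / total_latency_ms(after))
```

With these assumed numbers, the loop goes from 70 ms per frame (over the 33.3 ms budget at 30 fps) to 28 ms (within it), an end-to-end speedup of 2.5×. The gap between 15× and 2.5× is the usual Amdahl's-law effect: only the accelerated stage gets faster. A pipelined system, where stages overlap, is instead limited by its slowest stage.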

Why AI Workloads Benefit Massively

AI-driven applications rely heavily on fast, clean, and consistent image data. libcamera 0.7.1 directly enhances this pipeline.

1. Faster Preprocessing for AI Models

Before AI models can process images, they require:

- Demosaicing

- Noise reduction

- Color correction

SoftISP performs core steps such as demosaicing and color correction in software, and running them on the GPU shortens this stage.

Result:

- Shorter capture-to-inference cycles

- Lower end-to-end latency
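
The preprocessing steps above can be illustrated with a minimal, CPU-only sketch that turns a raw RGGB Bayer frame into 8-bit RGB. It is not libcamera's SoftISP code. The black level, white level, white-balance gains, and gamma value are assumptions. The demosaic simply bins each 2×2 Bayer quad into one RGB pixel, whereas real ISPs interpolate to full resolution. Noise reduction is omitted.

```python
# Minimal software-ISP sketch: raw RGGB Bayer frame -> 8-bit RGB.
# Illustrative only; this is not libcamera's SoftISP implementation.

BLACK_LEVEL = 64             # assumed sensor black level (10-bit units)
WHITE_LEVEL = 1023           # 10-bit saturation value
WB_GAINS = (1.8, 1.0, 1.5)   # assumed (R, G, B) white-balance gains
GAMMA = 1 / 2.2              # assumed display gamma


def build_gamma_lut(size: int = 256) -> list[int]:
    """Precompute an 8-bit gamma table so each pixel needs only a lookup."""
    return [round(255 * (i / (size - 1)) ** GAMMA) for i in range(size)]


def subtract_black(value: int) -> int:
    """Remove the sensor's black-level offset, clamping at zero."""
    return max(value - BLACK_LEVEL, 0)


def process(raw: list[list[int]]) -> list[list[tuple[int, int, int]]]:
    """Black level -> 2x2 binned demosaic -> white balance -> gamma."""
    lut = build_gamma_lut()
    span = WHITE_LEVEL - BLACK_LEVEL
    out = []
    for y in range(0, len(raw) - 1, 2):
        row = []
        for x in range(0, len(raw[0]) - 1, 2):
            # RGGB quad: R at (y, x), greens at (y, x+1) and (y+1, x), B at (y+1, x+1).
            r = subtract_black(raw[y][x])
            g = (subtract_black(raw[y][x + 1]) + subtract_black(raw[y + 1][x])) / 2
            b = subtract_black(raw[y + 1][x + 1])
            pixel = []
            for value, gain in zip((r, g, b), WB_GAINS):
                linear = min(value * gain / span, 1.0)  # normalize to [0, 1] and clip
                pixel.append(lut[round(linear * 255)])  # apply gamma via the LUT
            row.append((pixel[0], pixel[1], pixel[2]))
        out.append(row)
    return out


if __name__ == "__main__":
    frame = [
        [200, 300, 210, 310],
        [290, 150, 295, 155],
        [64, 64, 1023, 1023],
        [64, 64, 1023, 1023],
    ]
    for row in process(frame):
        print(row)
```

Each output pixel depends only on its own quad, which is why this kind of per-pixel work maps well onto GPU shaders.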

2. GPU Acceleration = Real-Time AI

Modern AI workloads depend on GPUs. libcamera’s move toward GPU-assisted pipelines means:

- Image processing takes less CPU time, leaving cores free for application logic

- AI models receive processed frames sooner

- Each device can handle more streams before the CPU becomes the bottleneck

This is critical for:

- Autonomous systems

- Smart surveillance

- Industrial inspection
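
One common way to keep image processing from stalling inference is to run the two stages concurrently and connect them with a bounded queue. The sketch below is a generic pattern, not a libcamera API: `isp` and `infer` are hypothetical callables standing in for frame processing and model inference. In CPython, real overlap requires those callables to release the GIL, as many native or GPU-backed libraries do.

```python
import queue
import threading
from typing import Any, Callable, Iterable

_DONE = object()  # sentinel marking the end of the frame stream


def run_pipeline(
    frames: Iterable[Any],
    isp: Callable[[Any], Any],
    infer: Callable[[Any], Any],
    max_queue: int = 2,
) -> list[Any]:
    """Run `isp` on a worker thread and `infer` on the calling thread.

    The stages overlap: while the model works on frame N, the worker can
    already be processing frame N+1. A bounded queue applies back-pressure
    so a slow consumer cannot make memory grow without limit.
    Error handling is omitted: an exception in `isp` would leave the
    consumer waiting forever.
    """
    handoff: queue.Queue = queue.Queue(maxsize=max_queue)

    def producer() -> None:
        for frame in frames:
            handoff.put(isp(frame))  # blocks while the queue is full
        handoff.put(_DONE)

    worker = threading.Thread(target=producer, daemon=True)
    worker.start()

    results = []
    while (item := handoff.get()) is not _DONE:
        results.append(infer(item))
    worker.join()
    return results


if __name__ == "__main__":
    # Hypothetical stand-ins: "ISP" doubles a value, "inference" adds one.
    print(run_pipeline(range(5), isp=lambda f: f * 2, infer=lambda x: x + 1))
```

The bounded queue trades memory for waiting: if inference falls behind, the ISP thread pauses instead of buffering frames without limit. A latency-sensitive system might instead drop the oldest frame so inference always sees the newest one.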

3. Better Data Quality = Better AI Accuracy

AI models are only as good as their input data.

libcamera enables:

- Fine-grained control over image pipelines

- Consistent preprocessing across devices

Result:

- Improved model accuracy

- Fewer mismatches between the images a model was trained on and the images it sees in deployment
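
Consistent preprocessing across devices usually means mapping each sensor's raw values onto a common scale before they reach a model. The sketch assumes hypothetical per-device calibration profiles (black level, saturation level, and a sensitivity gain). `DeviceProfile` is not a libcamera type, and real calibration covers far more, such as color matrices and lens shading.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical per-sensor calibration values (not a libcamera type)."""
    black_level: int
    white_level: int
    gain: float = 1.0  # corrects this unit's sensitivity against a reference


def normalize(raw_value: int, profile: DeviceProfile) -> float:
    """Map a raw sample to a device-independent linear value in [0, 1]."""
    span = profile.white_level - profile.black_level
    linear = (raw_value - profile.black_level) / span * profile.gain
    return min(max(linear, 0.0), 1.0)


# Two hypothetical cameras: a 10-bit sensor and a 12-bit sensor.
cam_a = DeviceProfile(black_level=64, white_level=1023)
cam_b = DeviceProfile(black_level=256, white_level=4095)

if __name__ == "__main__":
    # Assume both cameras see the same mid-grey patch; the raw numbers differ...
    a, b = normalize(544, cam_a), normalize(2176, cam_b)
    # ...but after per-device normalization the values are nearly equal.
    print(f"cam_a: {a:.4f}  cam_b: {b:.4f}")
```

Keeping these profiles alongside the deployment, rather than baking sensor quirks into the model, is what lets the same model accept frames from different hardware.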

Why IoT Ecosystems Need libcamera 0.7.1

IoT devices are rapidly evolving from simple sensors to intelligent edge nodes.

Challenges in IoT Vision Systems:

- Limited compute power

- Power constraints

- Real-time processing requirements

libcamera 0.7.1 addresses these challenges:

1. Efficient Resource Utilization

- Moves image processing to the GPU where one is available

- Reduces unnecessary CPU load

2. Scalable Multi-Device Deployment

- Unified framework simplifies deployment

- Works across diverse hardware

3. Software-Defined Imaging

- No dependency on proprietary hardware pipelines

- Easier updates and maintenance

This is essential for:

- Smart cities

- Retail analytics

- Agriculture monitoring

Edge Computing: Where libcamera Truly Shines

Edge computing is all about processing data closer to the source.

Camera data is:

- High bandwidth

- Latency-sensitive

- Compute-intensive

libcamera 0.7.1 aligns perfectly with these needs.

Key Edge Benefits:

1. Reduced Latency

Processing happens on the device or at a nearby edge node:

- Faster decision-making

- Real-time responsiveness

2. Lower Bandwidth Usage

Instead of streaming raw video:

- Process locally

- Send only insights
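
The bandwidth argument is easy to quantify. The sketch compares the bitrate of unprocessed sensor data with that of a small JSON result sent once per frame. The resolution, 10-bit depth, frame rate, and message fields are assumptions for illustration.

```python
import json


def raw_bitrate_bps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Bits per second needed to stream unprocessed sensor frames."""
    return width * height * bits_per_pixel * fps


def insight_bitrate_bps(message: dict, fps: float) -> float:
    """Bits per second needed to send one compact JSON result per frame."""
    payload = json.dumps(message, separators=(",", ":")).encode("utf-8")
    return len(payload) * 8 * fps


# Hypothetical result an edge node might send instead of the frame itself.
detection = {
    "cam": "line-3",
    "t": 1700000000.123,
    "label": "defect",
    "score": 0.94,
    "box": [412, 188, 57, 61],
}

if __name__ == "__main__":
    raw = raw_bitrate_bps(1920, 1080, 10, 30)  # assumed 10-bit 1080p30 raw Bayer
    insights = insight_bitrate_bps(detection, 30)
    print(f"raw: {raw / 1e6:.2f} Mbit/s, insights: {insights / 1e3:.1f} kbit/s")
    print(f"reduction: {raw / insights:,.0f}x")
```

Raw 1080p30 at 10 bits per pixel works out to 622.08 Mbit/s, while the example message serializes to under 100 bytes, roughly 21 kbit/s at 30 messages per second. Compressed video would narrow this gap considerably, but results-only traffic generally remains far smaller.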

3. Distributed Intelligence

Multiple edge nodes can:

- Process independently

- Scale seamlessly

This is critical for:

- Autonomous vehicles

- Smart factories

- Security systems

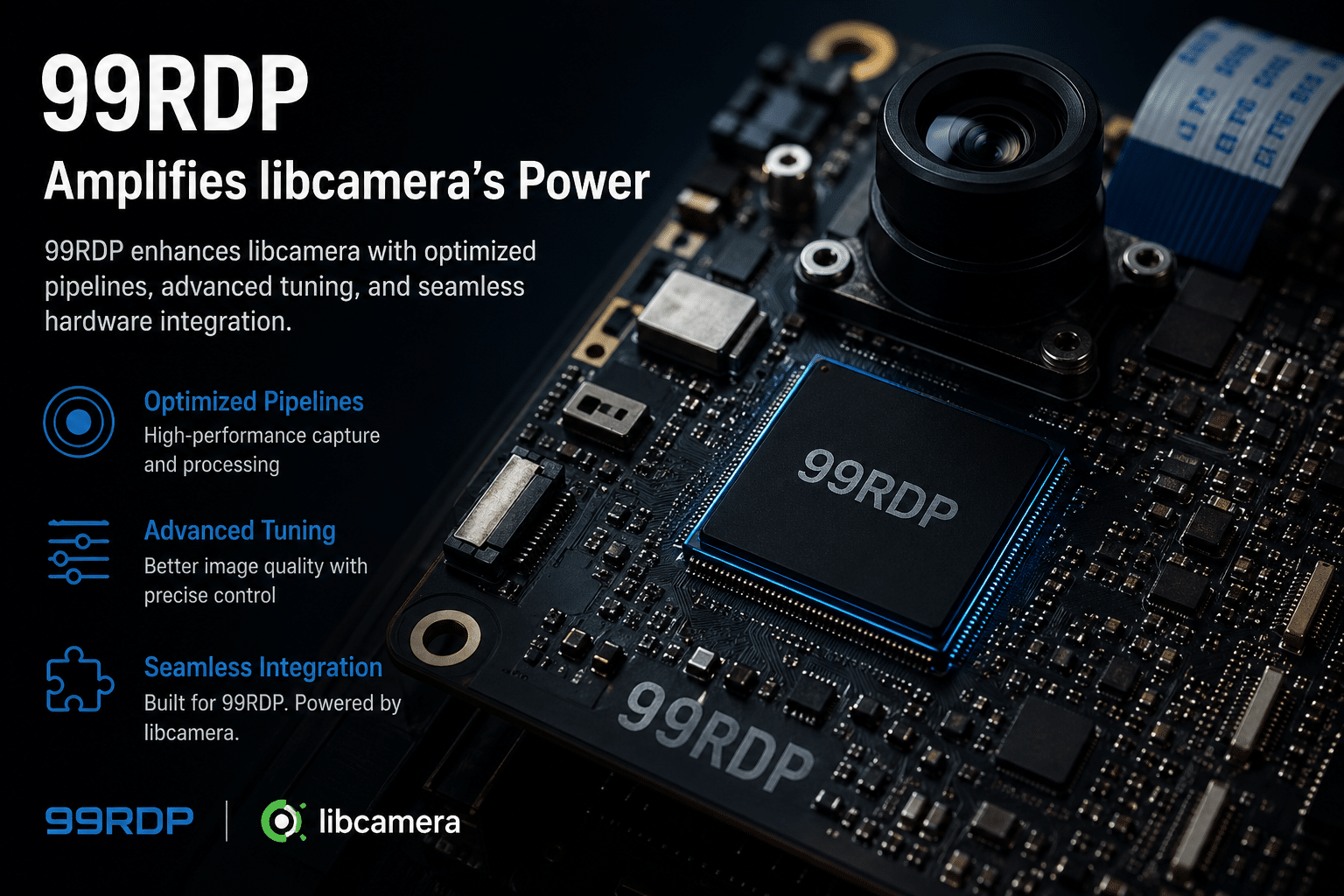

The Missing Piece: Scalable Infrastructure

While libcamera improves software capabilities, infrastructure determines real-world performance.

This is where 99RDP plays a strategic role.

How 99RDP Amplifies libcamera’s Power

1. GPU-Enabled Remote Environments

libcamera’s SoftISP benefits from GPU acceleration.

99RDP provides:

- High-performance GPU-backed servers

- Remote accessibility

Developers can:

- Test pipelines at scale

- Run AI workloads without local hardware limitations

2. Scalable Vision Workloads

Instead of relying on a single device:

- Deploy multiple camera pipelines

- Process them on remote infrastructure

Result:

- Horizontal scalability

- Cost-efficient expansion
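
Spreading many camera streams over a pool of remote GPU workers is a scheduling problem. Here is a minimal sketch, assuming each stream has a known relative processing cost and using the greedy longest-processing-time (LPT) heuristic. The stream names, costs, and worker names are hypothetical and not tied to any 99RDP interface.

```python
import heapq


def assign_streams(stream_costs: dict[str, float], workers: list[str]) -> dict[str, list[str]]:
    """Greedily balance camera streams across workers (LPT heuristic).

    Streams are placed most expensive first, each onto the worker that
    currently carries the smallest total cost.
    """
    heap = [(0.0, name) for name in workers]  # (current load, worker name)
    heapq.heapify(heap)
    plan: dict[str, list[str]] = {name: [] for name in workers}
    for stream, cost in sorted(stream_costs.items(), key=lambda kv: kv[1], reverse=True):
        load, worker = heapq.heappop(heap)  # least-loaded worker
        plan[worker].append(stream)
        heapq.heappush(heap, (load + cost, worker))
    return plan


if __name__ == "__main__":
    # Hypothetical relative costs, e.g. estimated GPU time per second of video.
    costs = {"cam1": 4, "cam2": 3, "cam3": 3, "cam4": 2}
    print(assign_streams(costs, ["gpu-a", "gpu-b"]))
```

LPT is not optimal in general, but it is simple and keeps loads close to balanced. Scaling out is then a matter of adding names to `workers`.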

3. Remote Development & Deployment

Building AI + vision systems often requires:

- Iterative testing

- Cross-platform compatibility

99RDP enables:

- Remote debugging

- Continuous integration of camera pipelines

4. High Availability for Production Systems

Vision systems often run 24/7.

With 99RDP:

- Reliable uptime

- Secure environments

- Consistent performance

Ideal for:

- Surveillance networks

- Enterprise AI systems

Real-World Use Cases

1. Smart Manufacturing

- Cameras monitor production lines

- AI detects defects in real time

libcamera + 99RDP:

- Faster processing

- Scalable inspection systems

2. Autonomous Systems

- Cameras feed real-time data

- AI models make split-second decisions

Improved pipeline:

- Lower latency

- Better reliability

3. Smart Cities

- Traffic monitoring

- Public safety analytics

Distributed edge + remote compute:

- Efficient scaling

- Centralized intelligence

4. Retail Analytics

- Customer behavior tracking

- Inventory monitoring

Enhanced processing:

- Real-time insights

- Better decision-making

Industry Trend: Software-Defined Vision

libcamera 0.7.1 reinforces a major industry shift:

From:

- Hardware-dependent pipelines

- Closed ecosystems

To:

- Software-defined imaging

- Open, flexible frameworks

Final Thoughts

libcamera 0.7.1 is more than a version update: it strengthens the foundation for future vision systems.

It enables:

- Faster image processing

- Better AI integration

- Scalable multi-device deployments

But software alone isn’t enough.

To fully unlock its potential, you need robust, scalable, and GPU-enabled infrastructure.

That’s where 99RDP becomes a critical enabler—bridging the gap between cutting-edge software and real-world deployment.

Bottom Line

- libcamera 0.7.1 accelerates the shift toward GPU-powered, software-defined imaging

- AI, IoT, and edge computing stand to gain massively

- Combining libcamera with platforms like 99RDP creates a future-ready, scalable vision ecosystem

If you’re building next-gen camera-driven applications, this isn’t just an upgrade—it’s an opportunity to rethink your entire architecture.
